Professor Dawn Woodard Aids the Diagnosis of Performance Problems in Large-Scale Distributed Computing Systems

Mark Eisner (ORIE); April 29, 2011

A recent lengthy outage crisis at an Amazon data center in Virginia that provides information processing services for a large number of websites has put a spotlight on the reliability of an approach to service known as "cloud computing."

Amazon, Microsoft, Google and other organizations have pioneered the business of establishing data centers with as many as hundreds of thousands of computer servers that run software for applications such as search, email processing, e-commerce, and data storage for many companies. Such large, distributed computing systems are at the heart of cloud computing, so-called because a cloud symbol is often used in diagrams illustrating the connection of individual computers to the internet. Cloud computing is forecast to become a multi-billion dollar business.

In a recently published paper, ORIE Assistant Professor Dawn Woodard and Moises Goldszmidt of Microsoft Research describe a new method of exploiting statistical techniques to rapidly, accurately and automatically match a currently occurring performance lapse, or "crisis," which in the extreme could lead to an outage, to previous crises whose causes may be known or unknown.

The method can be used as part of a process to choose the optimal intervention (taking into account the uncertainty of the match), thereby minimizing the expected cost of the crisis, a cost which may include payouts to clients for violation of service agreements as well as client dissatisfaction.
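
As a rough illustration of choosing an intervention under an uncertain match, the sketch below weights the cost of each intervention by the probability that the current crisis belongs to each known type and picks the cheapest in expectation. The crisis types, intervention names, probabilities and costs are all hypothetical placeholders, not figures from the paper, and the paper's actual decision process may differ.

```python
# Minimal sketch of expected-cost intervention selection.
# All names and numbers below are hypothetical, chosen only for illustration.

def expected_costs(match_probs, cost_table):
    """Return {intervention: expected cost} given uncertain crisis type.

    match_probs: {crisis_type: probability that the current crisis is this type}
    cost_table:  {intervention: {crisis_type: cost if that type is the true one}}
    """
    return {
        action: sum(match_probs[ctype] * cost for ctype, cost in costs.items())
        for action, costs in cost_table.items()
    }


def best_intervention(match_probs, cost_table):
    """Pick the intervention with the smallest expected cost."""
    costs = expected_costs(match_probs, cost_table)
    return min(costs, key=costs.get)


# Hypothetical match probabilities for crisis types A, B and C.
match_probs = {"A": 0.7, "B": 0.2, "C": 0.1}

# Hypothetical cost of each intervention under each true crisis type.
cost_table = {
    "restart_service": {"A": 10.0, "B": 80.0, "C": 90.0},
    "add_capacity": {"A": 60.0, "B": 15.0, "C": 70.0},
    "roll_back_config": {"A": 50.0, "B": 50.0, "C": 5.0},
}

costs = expected_costs(match_probs, cost_table)
choice = best_intervention(match_probs, cost_table)
print(choice, costs)
```

With these made-up numbers the match to type A dominates, so the intervention that is cheap for type A wins even though it would be costly if the crisis were really of type B or C.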

The new method is based on measurements ("metrics") that are routinely monitored for each server at a data center. For example, the level of utilization of the server's central processor and memory, the number of emails awaiting filtering for spam, and other key performance indicators are continually tracked and aggregated over short, fixed-length time intervals.

|

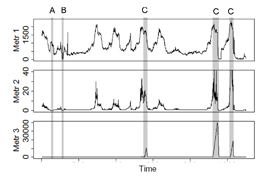

| Three metrics, with crisis intervals of types A, B and C highlighted. |

When graphed, the median time series of such metrics, taken across all servers, exhibit characteristic behavior in times of crisis, with the pattern of behavior of the collection of metrics differing with different causes. Crisis types may include configuration errors, overloads, spikes in workload, and even disruption in power to the data center, each with different patterns, or 'fingerprints.' Outages from a crisis are typically avoided by fail-safe and redundant mechanisms, but even when they are, degraded performance is at best inconvenient and at worst expensive.
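
To make the idea of a median time series concrete, here is a minimal sketch that takes one metric reported by several servers over the same fixed-length intervals and computes the median across servers for each interval. The server names and utilization values are invented for illustration, and the function name `median_series` is not from the paper.

```python
from statistics import median


def median_series(per_server):
    """Compute the per-interval median of one metric across all servers.

    per_server: {server_name: [metric value for each time interval]}
    Returns a list with one median per time interval.
    """
    series = list(per_server.values())
    if not series:
        return []
    n_intervals = len(series[0])
    if any(len(s) != n_intervals for s in series):
        raise ValueError("all servers must report the same number of intervals")
    # zip(*series) groups the values of all servers interval by interval.
    return [median(values) for values in zip(*series)]


# Hypothetical CPU utilization for three servers over four intervals.
cpu_utilization = {
    "server-1": [0.30, 0.32, 0.90, 0.95],
    "server-2": [0.28, 0.35, 0.85, 0.30],
    "server-3": [0.31, 0.30, 0.88, 0.92],
}

cpu_median = median_series(cpu_utilization)
print(cpu_median)
```

Summarizing each interval by a median yields a series whose length depends on the number of intervals rather than on the number of servers, and a single server that behaves differently from the rest (server-2 in the last interval here) does not move the summary much.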

Woodard and Goldszmidt's approach is designed to carry out computations during the time intervals between crises to automatically group past crises into clusters, fully employing an approach known as Bayesian inference. The computation can start with input from human experts. It then applies values of the observed metrics for each crisis in the past to update the estimate of the number of different types, or clusters, into which crises can be gathered and the best cluster to assign to each crisis.

When a new performance lapse is detected (sometimes even before it is technically identified as having started), the values of the metrics associated with it are incorporated into the input data and a new set of clusters is very rapidly and accurately generated, thereby identifying which previous crises the current crisis most resembles. This information can lead to early resolution of the crisis.
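
The matching step can be illustrated with a deliberately simplified stand-in: Bayes' rule applied to a fixed set of past crisis clusters, each summarized by a mean fingerprint. This is not the authors' model, which re-estimates the clusters themselves; the Gaussian likelihood, the shared standard deviation `sd`, the prior weights and the fingerprint values are all assumptions made for the sketch.

```python
import math


def log_gaussian(x, mean, sd):
    """Log density of a normal distribution with the given mean and sd."""
    return -0.5 * math.log(2 * math.pi * sd * sd) - (x - mean) ** 2 / (2 * sd * sd)


def match_posterior(new_fingerprint, clusters, sd=0.1):
    """Posterior probability that a new crisis belongs to each past cluster.

    clusters: {name: (prior_weight, [mean value of each metric])}
    Assumes independent Gaussian noise with a shared standard deviation
    (a simplification made for this sketch).
    """
    log_post = {
        name: math.log(prior)
        + sum(log_gaussian(x, m, sd) for x, m in zip(new_fingerprint, means))
        for name, (prior, means) in clusters.items()
    }
    # Subtract the largest log value before exponentiating to avoid underflow.
    top = max(log_post.values())
    unnorm = {name: math.exp(lp - top) for name, lp in log_post.items()}
    total = sum(unnorm.values())
    return {name: w / total for name, w in unnorm.items()}


# Hypothetical clusters: prior weight and mean fingerprint over three metrics.
past_clusters = {
    "A": (0.5, [0.9, 0.2, 0.1]),
    "B": (0.3, [0.2, 0.8, 0.3]),
    "C": (0.2, [0.1, 0.1, 0.9]),
}

posterior = match_posterior([0.85, 0.25, 0.15], past_clusters)
best_match = max(posterior, key=posterior.get)
print(best_match, posterior)
```

The output is a probability for each past crisis type rather than a single hard label, which is what allows the uncertainty of the match to be carried into any later decision.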

To test the value of their method, Woodard and Goldszmidt applied it to data from one of Microsoft's Hosting Services for the first four months of 2008. During that time, twenty-seven performance problems of varying severity occurred. Experts were able to diagnose the causes of most of them. More than 90% of the time the new clustering method determined the correct cluster membership, on average doing so from only an early portion of the data, which in some cases was available even before the technical start of the crisis. Moreover, the technique identified each recurrent problem in record time.

The method "dramatically outperforms a state-of-the-art...clustering method," according to the authors. Beyond speed and accuracy, it has several advantages, including the fact that it does not grow in complexity with the number of servers as distributed data centers get larger and larger. It can be used to distinguish the system status metrics that are most strongly associated with crises of a particular type; it "learns" a mapping from the metrics to the best intervention; it can forecast the evolution of a crisis as it occurs; and potentially it can be used to model not just the evolution but also how the evolution depends on the intervention that is taken.

Woodard was an intern with Goldszmidt at two different companies, a startup and HP Labs, before and during her Ph.D. studies. "As a statistician, my job is to facilitate applied work by developing and applying sound methods for learning from data," Woodard said. "I work closely with collaborators in applied disciplines in order to contribute to those disciplines, and to keep my work grounded in reality. In the case of this collaboration, Moises is an expert both on distributed computing systems and on statistical methods, which greatly facilitated our project. He had already done a huge amount of analysis of the data, both exploratory and statistical, before we began."

Goldszmidt said, "The goals for this technique are to facilitate the job of the data center operators and maximize the availability and reliability of cloud services. Professor Woodard has a unique combination of expertise in both statistics and computer science that moved this research effort to the next level. I am excited to continue our collaboration on this and related topics."